Author

Harley Sugarman

I’ve sat through the training.

Moved the window to my second monitor. Watched the progress bar crawl across the screen. Clicked through the quiz. Got back to whatever I was actually supposed to be doing.

I passed. Nothing changed.

I was a developer at the time, which should have made me more invested than most. If anyone was supposed to care about security training, it was probably me. And still, I pushed it to the background.

That experience is a big part of why I started Anagram. But I want to talk about something the security awareness industry would rather not examine too closely:

a lot of this training isn’t working,

a lot of the people running security awareness programs know that, and

the category still leans on metrics that make the problem easier to ignore.

The metric we use to lie to ourselves

Completion rate.

Did employees finish the module? Check. Are you compliant? Great. Move on.

Completion rate isn’t meaningless. It tells you whether people sat through the assignment. What it doesn’t tell you is whether they’ll make a better decision when something suspicious shows up in their inbox.

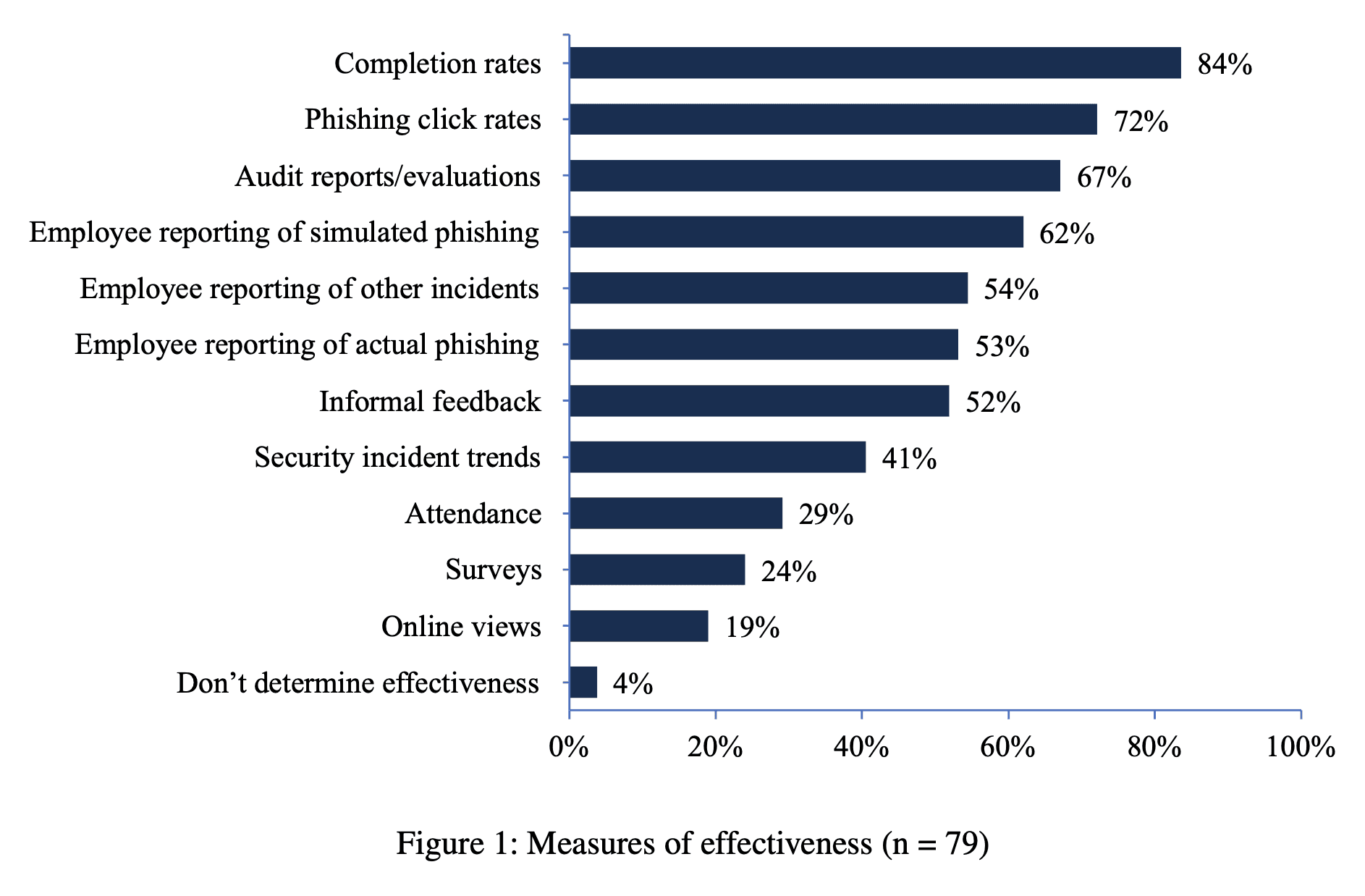

A lot of awareness programs still treat completion as the main proof that training worked. A NIST study of U.S. government security awareness programs found that completion rate was the most common measure of effectiveness at 84%.

Some teams also tracked basic behaviors, like whether employees reported phishing simulations. Far fewer looked at security incident trends, a much closer proxy for whether the program is actually reducing risk.

And when researchers looked more directly at whether annual training changes outcomes, the picture didn’t get any prettier. A UC San Diego Health study of more than 19,500 employees found no significant relationship between recently completing annual security training and phishing susceptibility during the eight-month study period.

People finished the module. They still clicked the link.

Part of me still wanted to believe the old model could be salvaged. That study mostly killed that idea.

Why this is your problem, not your employees’

When I ask CISOs whether they think their awareness program is working, most of them pause before answering.

That pause has been one of the most useful data points I’ve collected since starting this company.

Because they already know the answer.

They know annual training is tolerated, not valued. They know the content is generic. They know most employees are moving it to a second monitor the moment it starts. And they keep running it anyway, because “everyone completed the training” is still easier to say than “we changed behavior.”

The other issue is that training is one of the only parts of a security program every employee actually sees. Most security work is invisible to the rest of the company. Threat detection is invisible. Vulnerability management is invisible. Access controls are (mostly) invisible. The awareness program is not.

Everyone sees it. Everyone forms an opinion.

And that opinion doesn’t stay neatly contained to the module itself. If the main thing employees experience from the security team is tedious, generic, checkbox-driven training, that shapes how they think about security more broadly. It affects whether they take security seriously. It affects whether they report something suspicious. It affects how much credibility the team has when something actually urgent happens.

You may not be trying to train your organization to tune security out.

But if your program has looked the same for years, that may be exactly what you’ve done.

What actually tells you something

If you want to know whether your program is working, start with behavior.

Reporting rate is where I’d start if you could only pick one metric. What percentage of employees actively report suspicious emails or messages? That tells you someone noticed something, recognized the risk, and did something with it. Completion tells you none of that.
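Reporting rate is simple to compute once you have two lists: who received a suspicious message (real or simulated) and who reported it. Here is a minimal sketch, assuming those lists can be exported from your mail and reporting tools. The function name and the sample user names are made up for illustration.

```python
def reporting_rate(recipients, reporters):
    """Fraction (0 to 1) of recipients who reported the message."""
    recipients = set(recipients)
    if not recipients:
        raise ValueError("need at least one recipient")
    # Only count reports from people who actually received the message.
    return len(recipients & set(reporters)) / len(recipients)


rate = reporting_rate(
    recipients=["ana", "ben", "chi", "dev"],
    reporters=["ana", "chi", "zed"],  # "zed" never received it, so is ignored
)
print(f"Reporting rate: {rate:.0%}")  # Reporting rate: 50%
```

Tracking this per campaign, rather than as one annual number, is what makes the trend visible.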

Click rate read against the NIST Phish Scale matters more than raw click rate. The Phish Scale rates how difficult a given phishing email is to detect, and without that context a single point-in-time click rate is easy to manipulate by changing the difficulty of the simulation. The real question is whether your organization is getting better at spotting more sophisticated attacks over time.
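One way to keep click rate honest is to break it out by how hard each simulated email was to detect, instead of averaging everything together. The sketch below assumes each simulation has already been given a difficulty label. The labels "least" and "very" are shorthand chosen for this example, not official output from any tool, and the function name is hypothetical.

```python
from collections import defaultdict


def click_rate_by_difficulty(results):
    """results: iterable of (difficulty_label, clicked) pairs, one per delivered email."""
    sent = defaultdict(int)
    clicked = defaultdict(int)
    for difficulty, did_click in results:
        sent[difficulty] += 1
        clicked[difficulty] += int(did_click)
    # Click rate per difficulty label, as a fraction from 0 to 1.
    return {label: clicked[label] / sent[label] for label in sent}


results = [
    ("least", True), ("least", False), ("least", False), ("least", False),
    ("very", True), ("very", True), ("very", False), ("very", False),
]
print(click_rate_by_difficulty(results))  # {'least': 0.25, 'very': 0.5}
```

A blended click rate for this sample would be 37.5%, which hides the fact that the harder emails fooled twice as many people.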

Time to report matters too. Speed changes the outcome. The faster someone flags a suspicious message, the faster the security team can investigate and contain it. That is a far more operationally useful signal than a dashboard showing that 98% of employees completed a module at some point in Q3.
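Time to report can be summarized as the median gap between when a message was delivered and when someone reported it. This is a rough sketch, assuming you can pull both timestamps for each report. The function name and the sample times are invented for illustration.

```python
from datetime import datetime
from statistics import median


def median_minutes_to_report(events):
    """events: iterable of (delivered_at, reported_at) datetime pairs."""
    minutes = [
        (reported - delivered).total_seconds() / 60
        for delivered, reported in events
    ]
    if not minutes:
        raise ValueError("need at least one report")
    return median(minutes)


delivered = datetime(2025, 3, 3, 9, 0)
events = [
    (delivered, datetime(2025, 3, 3, 9, 4)),
    (delivered, datetime(2025, 3, 3, 9, 10)),
    (delivered, datetime(2025, 3, 3, 9, 45)),
]
print(median_minutes_to_report(events))  # 10.0
```

The median is used here instead of the mean so that one report filed days later does not swamp the number.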

Those are the numbers I’d want in front of me.

Why the category ended up here

To be fair, vendors didn’t invent this training structure out of nowhere.

The market asked for compliance. Auditors asked whether employees finished training. Boards wanted a clean number that could fit in a slide. Security teams wanted a product that was easy to roll out, easy to document, and easy to defend.

So the category optimized around the metric everyone could live with.

That’s how you end up with an industry full of products designed to maximize completion instead of behavior change. It’s also why, if the underlying goal is still “make this easy to assign, easy to finish, and easy to prove,” you usually end up in the same place no matter which vendor you pick.

What I think works better

The kind of training that would have changed my behavior as a developer was never going to be a longer video or a harder quiz.

It would have been shorter. More relevant. Closer to the kinds of decisions I actually had to make. It would have respected my time and shown me something I might realistically run into, instead of forcing me through generic examples built for nobody in particular.

That’s the problem we built Anagram around.

Shorter modules. Training that reflects the role. Scenarios that feel like the kinds of situations people actually run into. Reporting built around behavior, not box-checking. The product starts from a simple idea: if training is annoying, generic, and disconnected from real work, most people will treat it like mandatory background noise.

I use a pretty basic filter for product decisions: would I have hated this as an employee?

If the answer is yes, we don’t ship it.

If your program is mostly a compliance exercise and you want to see what the alternative looks like, we’re worth thirty minutes of your time.

© 2026 Enigma Analytics, Inc.

All rights reserved.