Author

Harley Sugarman

The easiest way to misunderstand phishing is to picture the wrong person clicking.

So picture the right one.

A senior engineer gets a phishing email on a Tuesday afternoon.

She's been working in security-adjacent roles for eight years. She flags suspicious emails for her team. She knows what a phishing attempt looks like.

The email is from a travel platform she used a few months back for a trip she's still thinking about. It says there's been unusual activity on her account. The sender address checks out. The logo matches. The copy is identical to every real email she's ever received from that company.

She clicks before she's thought about it.

Three seconds, start to finish.

Her first instinct is embarrassment. That instinct is wrong, and the fact that it's the automatic response is part of why organizations keep misreading this problem.

The assumption inside every incident report

When someone clicks a phishing link, the response inside most organizations follows the same pattern. There's the incident report. There's the remedial training. And underneath all of it, there's an assumption that rarely gets questioned: that a smarter, more careful person would have caught it.

That assumption is wrong, and it's expensive.

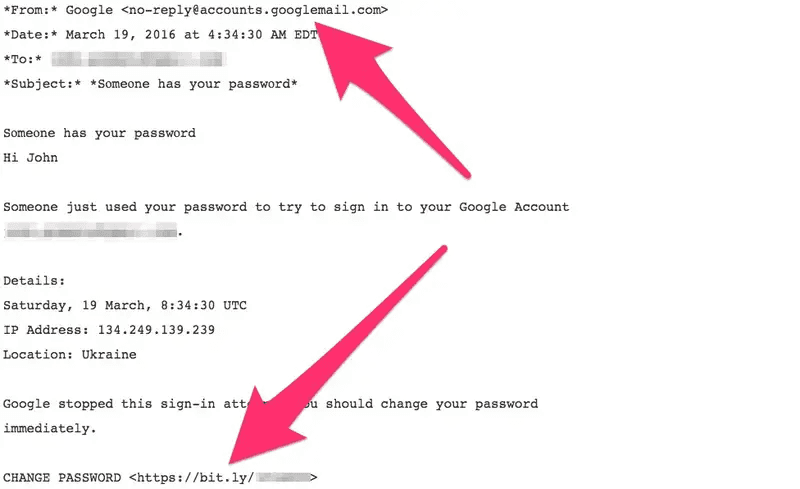

John Podesta, Hillary Clinton's campaign chairman, had his email compromised in 2016 through a phishing link.

Source: https://www.businessinsider.com/hillary-clinton-campaign-john-podesta-got-hacked-by-phishing-2016-10

The email claimed his Google account had been accessed from Ukraine. His team flagged it to their IT contractor as suspicious. The contractor responded that it was "legitimate," a typo, he later said, for "illegitimate." Podesta clicked. Tens of thousands of emails were exposed.

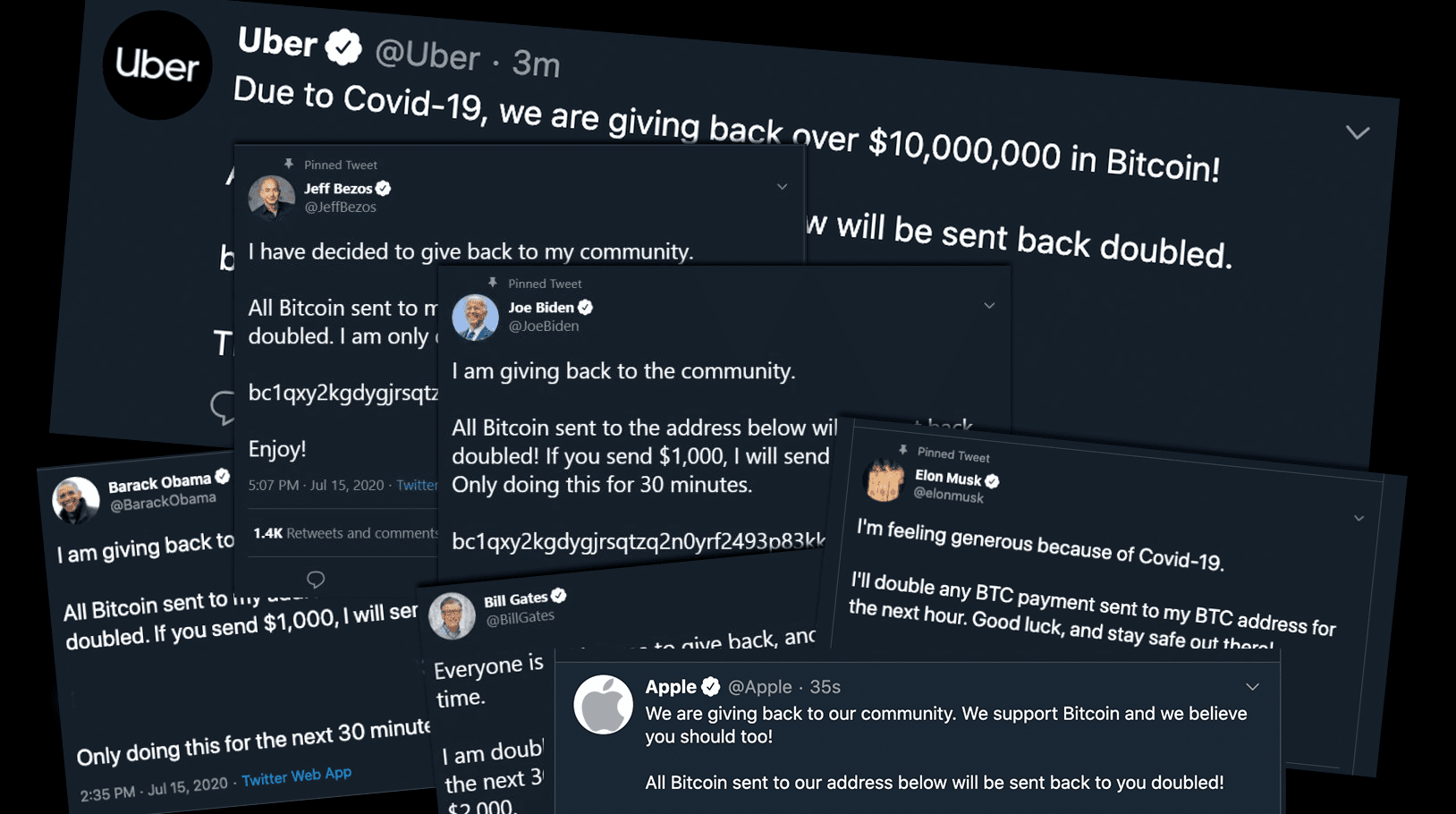

In July 2020, a group of teenagers compromised Twitter's internal systems in an afternoon. They didn't find a technical vulnerability. They called Twitter employees, impersonated internal IT support, and talked their way into admin tools.

Within hours they had hijacked the accounts of Barack Obama, Joe Biden, Elon Musk, and Apple to run a Bitcoin scam.

Source: https://threatcop.com/blog/twitter-breach/

The employees who handed over access worked at one of the most technically sophisticated companies in the world.

Attackers don't win by finding careless people. They win by finding the right moment.

What attackers understand about decision-making

When I started building Anagram, I spent a lot of time thinking about why some products actually change behavior and most training does not.

The principles are not complicated. Relevance pulls people in. Feedback reinforces behavior. Repetition builds habit.

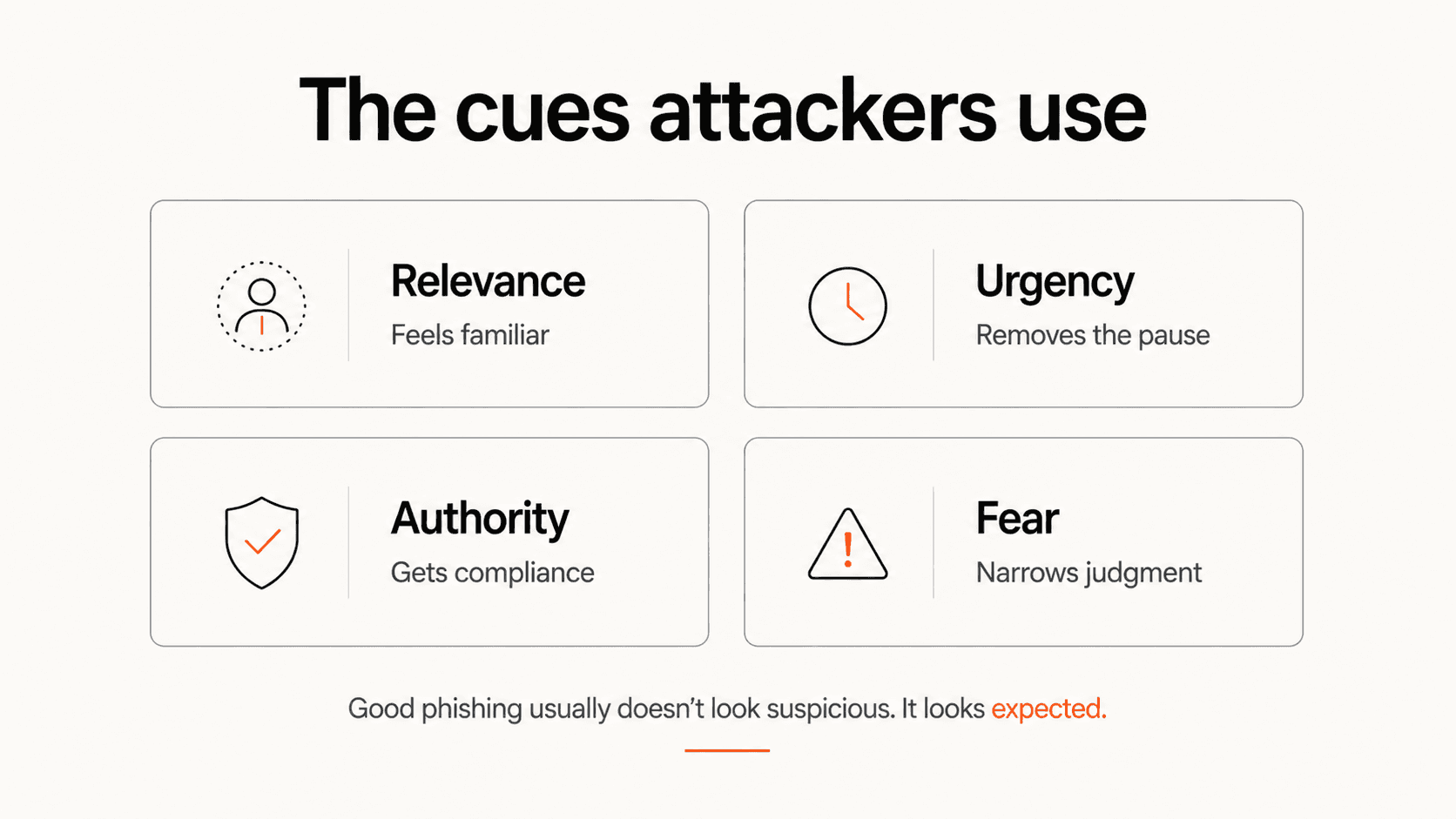

Attackers use the same principles, turned against the user. That is why the best phishing emails look familiar.

A vendor you know. A tool you use. A manager you trust. A deadline that feels real.

That familiarity lowers your guard. The email slips past the mental filter that exists to flag the unfamiliar, because the signal it sends is exactly the one your brain is trained to trust.

Urgency does the next part. "Your account will be suspended in 24 hours" isn't meant to make you panic in some dramatic way. It's meant to move you into action before the slower part of your brain has time to check.

Authority makes the request feel normal. If something appears to come from your CEO, your IT department, your bank, or a vendor you already work with, the default in most well-functioning organizations is to comply first and verify later. Most of the time, that shortcut works. Attackers redirect it.

None of these are flaws in the people who fall for them. They're features of how human beings process information under pressure.

Attackers have learned to exploit them with increasing precision.

Why AI has changed the math

For most of phishing's history, personalization was the limiting factor.

A targeted attack, one that referenced your specific role, your manager's name, the conference you attended last month, or the vendor your team uses, required research by a human being. That research took time, which meant targeted attacks were reserved for high-value targets.

AI breaks that constraint wide open.

The most important change isn't that phishing emails are better written, although they are. The bigger change is that attackers can now manufacture familiarity cheaply.

A message that would have taken a skilled attacker hours to craft can now be generated in seconds. The details that make an attack feel legitimate, like your job title, your recent activity, the post you made on LinkedIn three weeks ago, or the tool your company recently rolled out, are public or semi-public information that a model can pull together with very little human effort.

What used to be a targeted campaign against thirty executives can now become a targeted campaign against thirty thousand employees.

The economics have changed. And since an attacker only needs one person to act, the return on that scale is significant.

The attacks reaching employees today are easier to personalize, harder to spot, and cheaper to produce than the phishing programs most companies were built to handle.

Many security programs still look like they were built for an older version of the problem.

Why "be more careful" doesn't work

The instinct after an incident is understandable. Someone clicked something they shouldn't have. Remind people to pay closer attention, to think before they click, to stay vigilant.

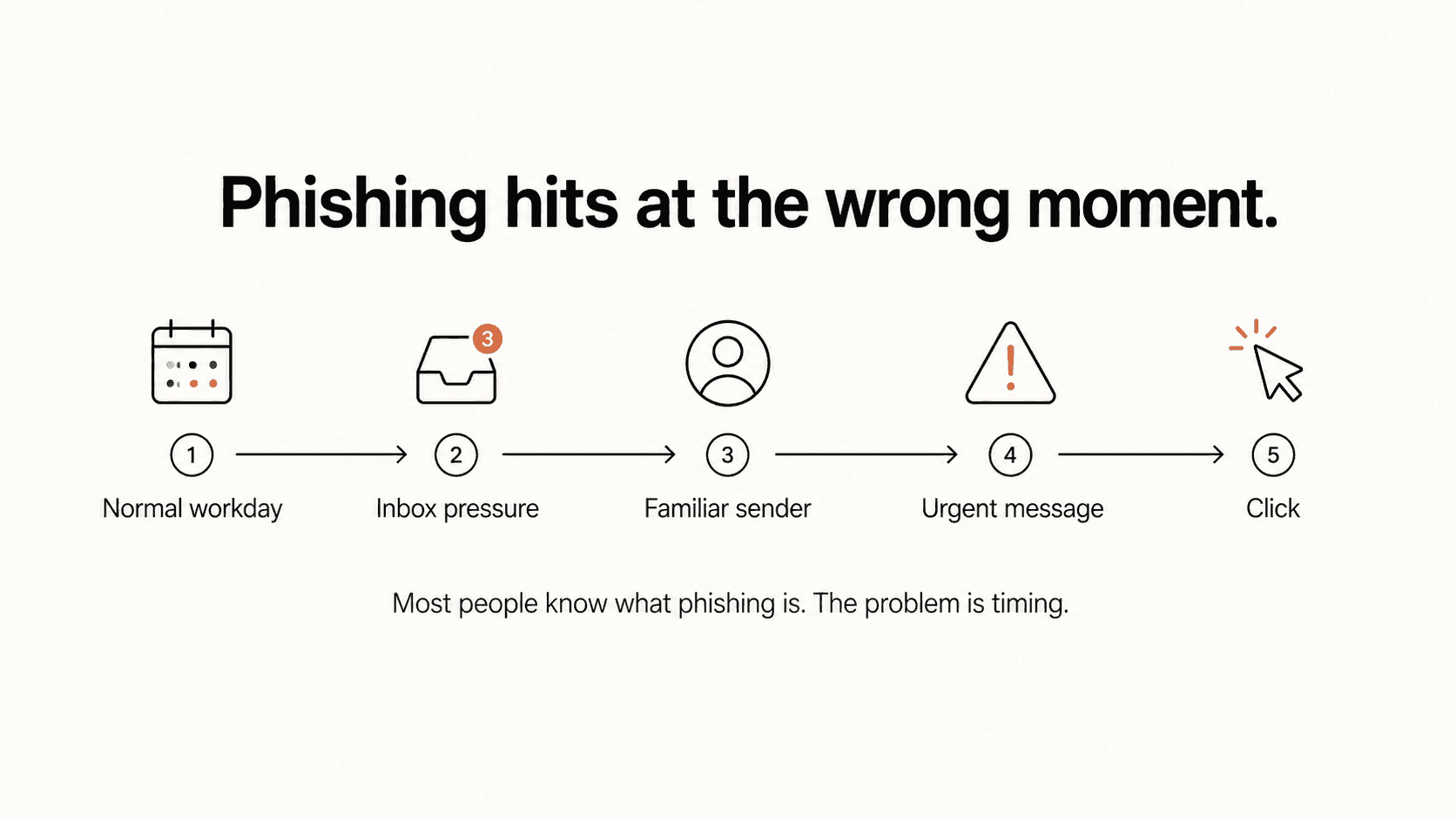

The problem is that vigilance is not a stable cognitive state.

Nobody can treat every email, Slack message, calendar invite, vendor notification, and phone call like a forensic investigation. That level of suspicion collapses under normal work. You can sustain heightened attention for a while. Then normal cognition reasserts itself, because you have a job to do.

Attackers don't need you to be careless all day. They need one moment when you're deep in a task, tired, rushing between meetings, or trying to clear your inbox before a deadline.

None of those are failures of character. They're Tuesday afternoon.

And Tuesday afternoon is when a well-timed phishing email does its best work.

The "gotcha" model of phishing simulation has the same structural problem. You send fake phishing emails, catch the people who click, assign remedial training. There's some value in that as a diagnostic tool. As a training mechanism, it produces resentment more than behavior change.

Employees who feel tricked by their own security team don't become more vigilant. They disengage from the program.

That is why completion is such a weak proxy for security behavior. It tells you the module happened. It does not tell you whether someone will pause at the moment that matters.

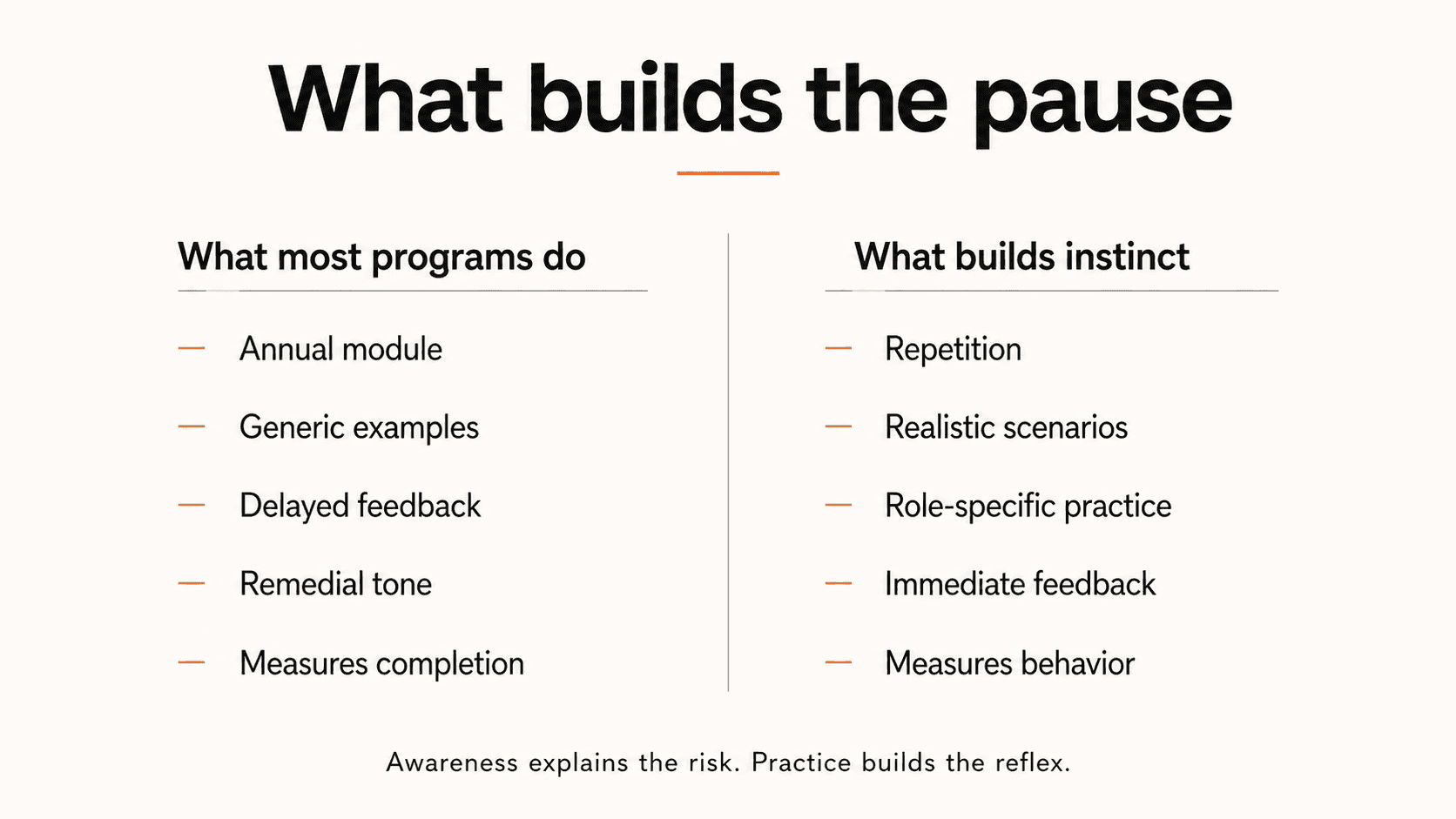

What actually builds the pause

If attackers exploit cognitive patterns, training has to work at the level of cognitive patterns.

A 45-minute annual module can teach people what phishing is. It doesn't train the reflex to slow down at the exact moments when slowing down is hardest: when something feels urgent, when it appears to come from authority, when it references something familiar.

What builds that reflex is repetition, in realistic conditions, over time.

The specifics vary by role. Finance, engineering, executives, and frontline teams are not exposed to the same attacks, which is why finance and engineering should not be getting the same security training.

The point is simple: training has to match the moments where people are most likely to make the wrong decision.

The same is true of feedback. A generic remedial module after someone clicks tells them they failed. Immediate, specific feedback tells them what cue they missed and what to look for next time.

The goal is not to teach employees to recognize one particular phishing email.

The goal is to build the habit of pausing.

Half a second of friction before acting, at the exact moments attacks are designed to eliminate that pause.

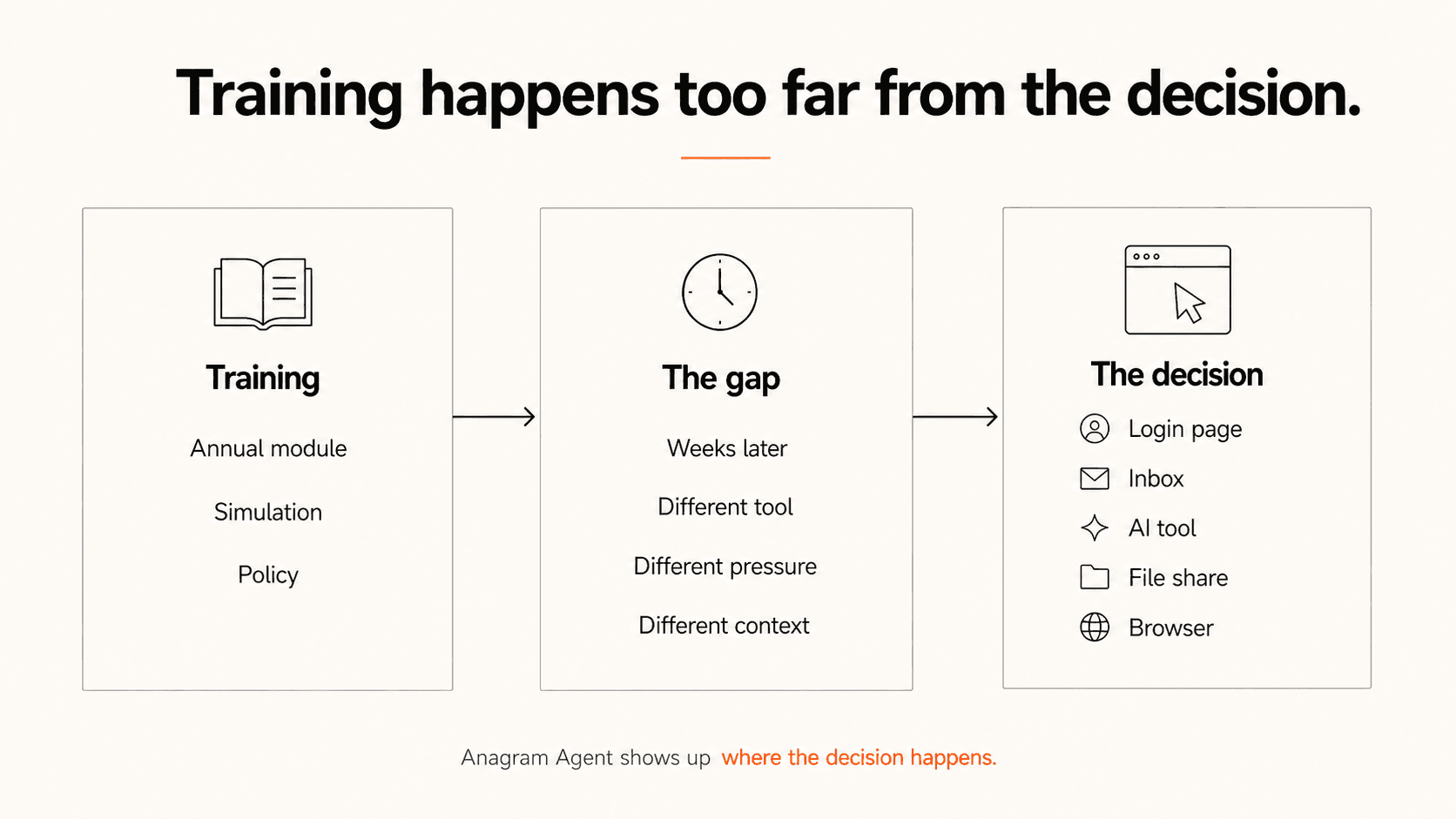

Why the moment matters

Most security training happens far away from the decision it is supposed to shape.

An employee takes a module in March, then faces a convincing vendor email in July. A developer watches a lesson about secrets, then pastes sensitive context into an AI tool three weeks later. A finance lead completes a phishing simulation, then gets a vendor change request during month-end close.

The risky decision happens inside the workflow.

The guidance usually happens somewhere else.

That gap is one of the reasons we built Anagram the way we did. Training matters, but it works better when it is reinforced by small moments of friction at the point of behavior.

If someone is about to enter credentials on a suspicious page, they don't need a 45-minute refresher on phishing. They need a warning that makes them pause.

If a developer is about to paste sensitive code or customer data into an AI tool, they don't need a quarterly lecture about data handling. They need a nudge in the workflow where that decision is being made.

If an employee is about to overshare a file, approve a risky browser action, or respond to a message carrying the usual social engineering cues, the best intervention is often small.

A pause.

A reason to check.

A way for the slower part of the brain to come back online before the action becomes automatic.

That is the job Anagram Agent is built for.

It is meant to close the distance between what people learn and where they need to use it.

Because the hardest part of security awareness is not understanding the concept. Most employees understand the concept.

The hard part is remembering it at the exact moment an attacker is trying to make them forget.

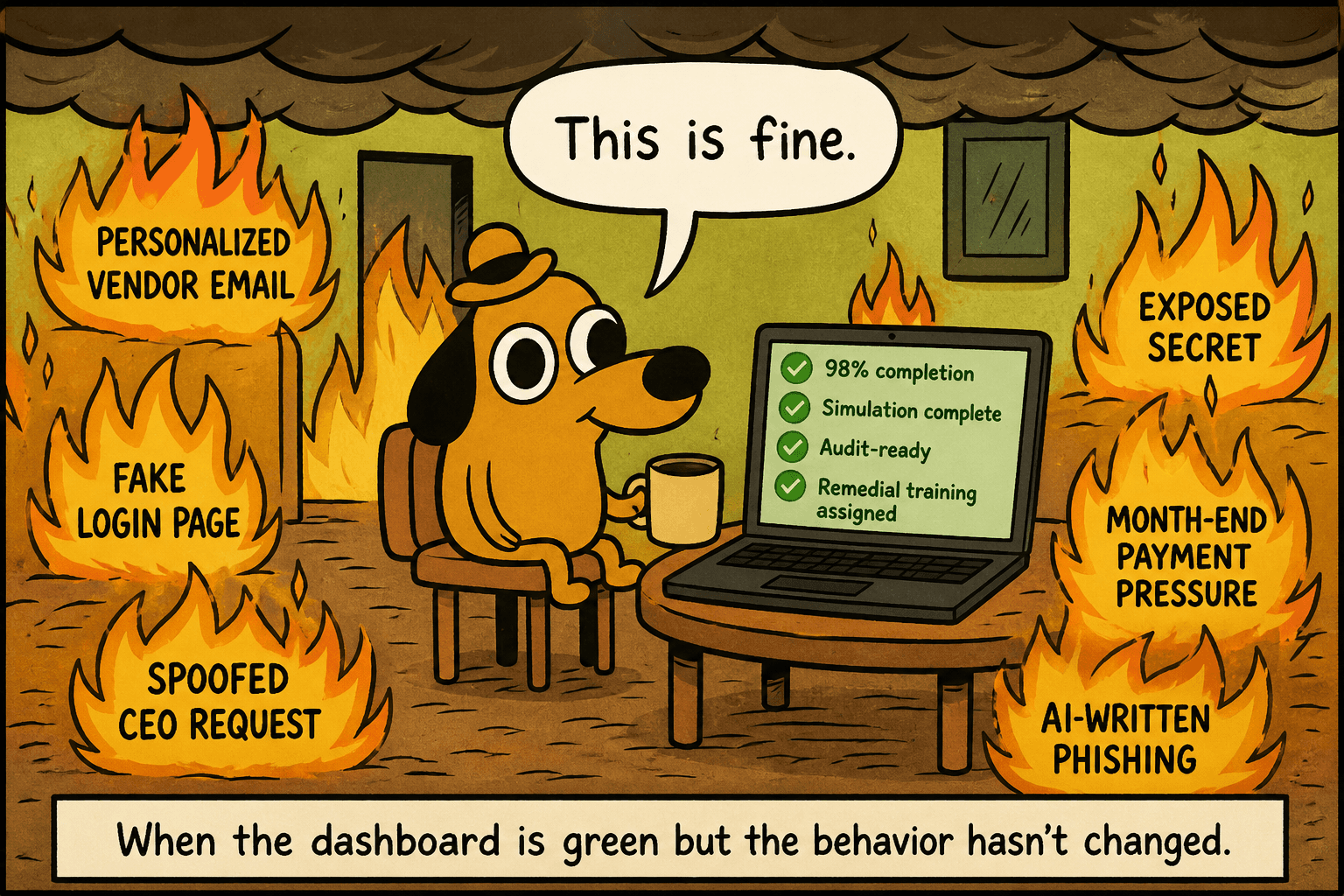

What this means for your phishing program

If your phishing program is built around annual modules, occasional simulations, completion reports, and remedial training after someone clicks, it may be measuring activity more than resistance.

That is a reasonable place to start.

It is not a great place to stop.

A completion report can tell you who finished the module. A simulation can tell you who clicked the test. Neither one tells you whether the program is building the reflex your employees need when the message is personalized, the timing is good, and the request feels normal.

That is the uncomfortable part of phishing training. The test environment and the attack environment are not the same.

Employees may understand phishing in the abstract and still miss it in the moment. They may pass the quiz and still trust the wrong signal. They may know the policy and still act too quickly when the request arrives during the worst possible part of the day.

The question is not whether employees know phishing exists.

They do.

The better question is whether your program has trained the pause in the moments where attackers are trying hardest to remove it.

The next time someone on your team clicks

You'll have a choice in how you frame it.

You can treat it as a failure of that person's judgment. Run the incident report, assign the module, move on, and wait for the next one.

Or you can treat it as information: about which triggers are being exploited in your organization, which teams are most exposed, and whether your training is building resistance that maps to how these attacks work in practice.

One of those framings leads somewhere.

"Be more careful" is what organizations say when they don't want to redesign the system.

The better questions are where the person was, what pressure they were under, what signal they trusted, and whether the program had trained the pause before that moment arrived.

The human brain hasn't changed. The attacks have.

That gap is where breaches happen.

If your phishing program still relies on annual training and post-click remediation, we can show you how Anagram helps teams build the pause before the risky action happens.

© 2026 Enigma Analytics, Inc.

All rights reserved.